Apache Flume

3/1/2023

Edge computing has become a keystone of future intelligent transportation systems, especially in smart cities, because it processes data near the user's location, at the edge of the cloud server. In smart cities, where distributed things have access to computational resources, data transfer becomes inevitable because of high latency. Even though numerous technologies have emerged to improve the communication, among geo-distributed devices, of data received from the cloud server, they still suffer from low learning performance. To address these challenges, an innovative artificial intelligence (AI) based edge node (E-Node) algorithm is implemented to optimize edge-to-edge learning for well-organized data migration. To attain high reliability, an AI-K-means neural network (KNN) and a convolution neural network (CNN) are used initially for pre-processing and filtering the edge node.

For medical IoT data, the authors propose a hybrid prediction model: density-based spatial clustering of applications with noise (DBSCAN) removes sensor data outliers, and a Random Forest machine learning classifier then provides better accuracy in diabetes detection. The authors believe that their big data pipeline will greatly help in the efficient storage of medical data from IoT applications and will provide a viable solution for processing medical IoT data and predicting disease from it.
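The DBSCAN outlier-removal step mentioned above can be illustrated with a minimal sketch. It is not the authors' implementation: the function names, the sample readings, and the `eps` and `min_samples` values are all assumptions chosen for illustration, and the Random Forest classification stage that would follow is not shown. Points that DBSCAN labels as noise are treated as sensor outliers and dropped.

```python
from math import dist

NOISE = -1  # label given to points that belong to no cluster


def dbscan(points, eps, min_samples):
    """Return one label per point: a cluster id (0, 1, ...) or NOISE."""
    labels = [None] * len(points)  # None means "not visited yet"

    def neighbors(i):
        # Indices of all points within eps of point i (point i included).
        return [j for j, q in enumerate(points) if dist(points[i], q) <= eps]

    cluster = -1
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        seeds = neighbors(i)
        if len(seeds) < min_samples:
            labels[i] = NOISE  # may still be claimed later as a border point
            continue
        cluster += 1
        labels[i] = cluster
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster  # border point: reachable but not core
            if labels[j] is not None:
                continue
            labels[j] = cluster
            reachable = neighbors(j)
            if len(reachable) >= min_samples:
                queue.extend(reachable)  # j is a core point, keep expanding
    return labels


def remove_outliers(points, eps, min_samples):
    """Keep only the points that DBSCAN assigns to some cluster."""
    labels = dbscan(points, eps, min_samples)
    return [p for p, label in zip(points, labels) if label != NOISE]


# Hypothetical (glucose mg/dL, BMI) readings; the last two are made-up glitches.
# Real features would normally be scaled before choosing eps.
readings = [
    (95, 22.1), (98, 23.0), (101, 22.7), (97, 21.9), (99, 22.4),
    (160, 31.2), (163, 30.8), (158, 31.5), (161, 30.9),
    (900, 22.0), (100, 95.0),
]
clean = remove_outliers(readings, eps=6.0, min_samples=3)
print(len(readings), "readings in,", len(clean), "kept")
```

In this sketch the cleaned readings would then be passed to a classifier. With real sensor data, `eps` and `min_samples` need tuning, since a value that is too small discards valid readings as noise.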

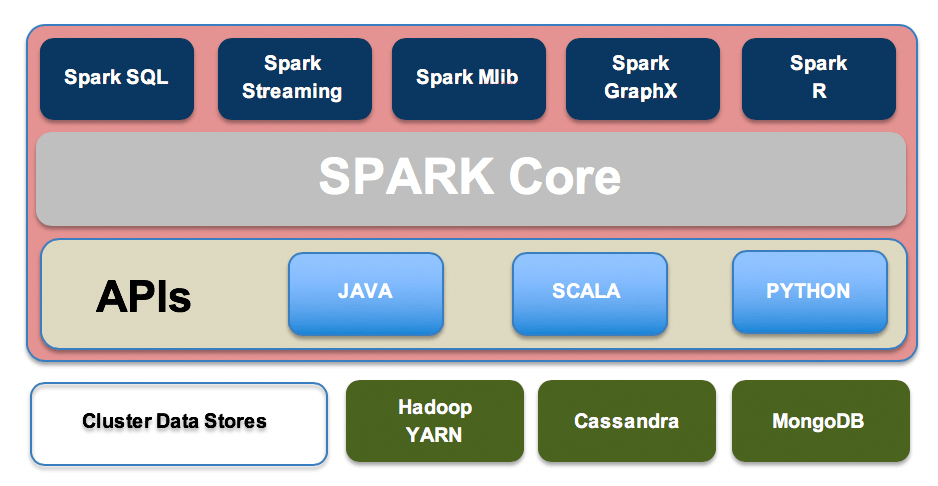

A lot of data is being generated and captured by Internet of Things (IoT) devices in health care systems. This data is real-time and unstructured in nature. However, storing and processing this real-time medical data in IoT applications is still a big challenge. In this chapter, the authors propose a new big data pipeline for storing and processing IoT medical data. The proposed platform uses Apache Flume to efficiently collect and transfer large amounts of IoT data from a cloud-based server into the Hadoop Distributed File System (HDFS), where the sensor-based medical data is stored. Apache Spark is to be used for processing this real-time data. Recursive Feature Elimination with Cross Validation (RFECV) is used to eliminate the less important features.

We show, as a second example, how all this distorts studies in hepatocellular carcinoma. It is not unlikely that these flawed data have been polluting biobanks for some time before stringent conditions for the veracity of data were implemented in big data. Therefore, all this could contribute to errors in future medical decisions.

Consider data from patients with cancer: from biopsy procedures to experimental tests, archiving methods, and computational algorithms, these data are continuously handled and so require critical and continuous “updates” to yield reproducible, reliable, and correct results.

Biomedical institutions rely on data evaluation and are turning into data factories. Big-data storage centers, supercomputing systems, and increased algorithmic efficiency allow us to analyze the ever-increasing amount of data generated every day in biomedical research centers. In network science, the principal intrinsic problem is how to integrate the data and information from different experiments on genes or proteins. Data curation is an essential process in annotating new functional data to known genes or proteins, undertaken by a biobank curator, which is then reflected in the calculated networks. We provide an example of how protein–protein networks today have space-time limits. The next step is the integration of data and information from different biobanks. Omics data and networks are essential parts of this step but also have flawed protocols and errors.
